Behaviour-Driven Development is, at its heart, about communication. It’s not about using Gherkin to formulate specifications, or Cucumber to run tests. It’s about stakeholders across the delivery lifecycle working in parallel from a shared vision, delivering systems quickly enough that they reflect fast-changing user needs.

Done right, BDD means no more miscommunication, minimal rework, and no more thinking “If only someone had told me that!”[i] It introduces a “ubiquitous language”: one that is understood by stakeholders regardless of their background.

Using this bridge language, everyone can work in parallel from an up-to-date understanding of the desired user behaviour. System designers, developers and testers can provide rapid feedback, communicating constantly and clearly to stay aligned. This in turn avoids the frustrating delays and costly bugs caused by building and testing the ‘wrong’ system.

Sounds great, right? This two-part article considers one approach for achieving this vision in practice. First, Part one (below) considers the core goals of BDD, evaluating some approaches that are not BDD in the full sense described. Part two then sets out a model-based approach, using visual modelling to maximise communication, collaboration and automation across the delivery lifecycle.

If you can’t wait until Part two, watch James Walker’s talk in the DevOps Bunker: Using Visual models to Unlock BDD. It will provide an overview of model-based Behaviour-Driven Development, setting out how it solves the challenges discussed in this article.

The driving argument will be that this alternative, which places flowcharts at the centre, offers a practical way to realise the benefits of Behaviour-Driven Development.

BDD aims to maintain a near-constant understanding of the desired system behaviour, with stakeholders in different roles collaborating seamlessly in parallel.

To this end, BDD aims to communicate clearly as soon as any one stakeholder’s understanding of the desired system changes. That understanding can then be corrected if it has become misaligned, or synthesised with a new understanding of the desired system. This makes sure that all stakeholders are pulling in the right directions.[ii]

Like other forms of iterative development, BDD must also be able to move this agreed vision rapidly from idea to release. The parallel design, development and testing must be at least fast enough to keep pace with changing user needs, incrementally improving the latest system to better fulfil them.

Maintaining an up-to-date understanding of changing user needs is therefore the first criterion for successful BDD. The second is the ability to deliver those fast-changing user needs accurately in production.

With these two goals in mind, the following three approaches will rarely constitute Behaviour-Driven Development:

Requirements gathering formats like Feature Files or user stories offer far greater flexibility to change than monolithic requirements documents. That’s good for BDD, and Gherkin is a particularly common syntax for this.

A further, significant advantage of Gherkin in Behaviour-Driven contexts is that it leverages natural language, easily understandable by a range of different stakeholders.

So, how well does introducing Gherkin by itself fulfil our first criterion? How well does it retain an up-to-date, accurate and complete understanding of fast-changing desired system behaviour?

In short, not particularly well.

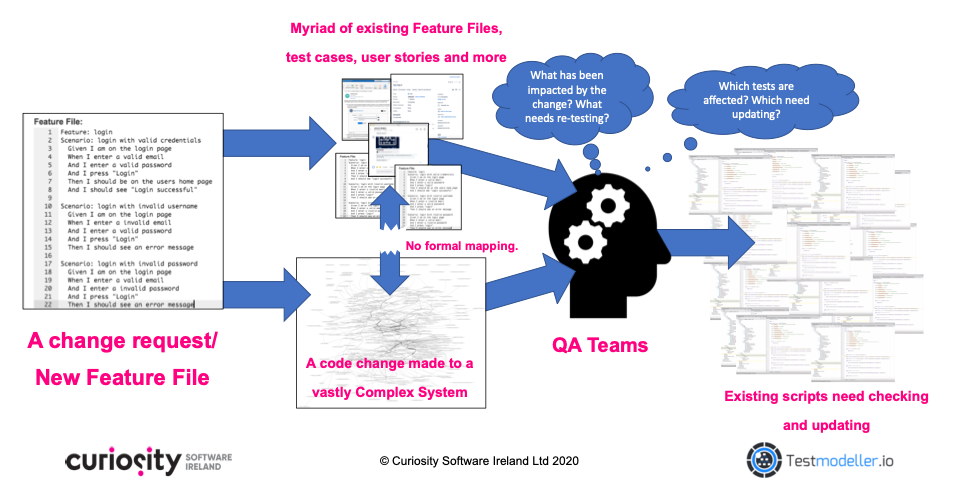

Firing off scenarios in Gherkin Feature Files does allow you to make change requests quickly; however, it rarely explains how the new Feature Files relate to previously defined scenarios. Fragmentary Feature Files in turn often simply pile up, providing little guidance on how the vast system logic should relate, or what needs to be changed across the existing system:

Figure 1 – Piling up Gherkin Feature Files does not build a coherent understanding of complex systems and can cause technical debt.

This increases the scope for incompleteness, as it is hard to spot logical gaps in a myriad of repetitive, unconnected text-based files. Meanwhile, the mass of existing Feature Files can grow increasingly out-of-date as new ones are added. Without updating existing files, technical debt and uncertainty will grow.
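To make the idea of a "logical gap" concrete, here is a minimal Python sketch. It assumes the preconditions of each scenario have already been extracted from the Feature Files into simple dictionaries. The feature, the condition names and the `find_gaps` helper are all hypothetical and used purely for illustration; this is not how any particular tool works.

```python
from itertools import product

# Hypothetical conditions for a login feature; names are illustrative only.
CONDITIONS = {
    "username": ["valid", "invalid"],
    "password": ["valid", "invalid"],
    "account": ["active", "locked"],
}

# Each dict stands for the preconditions ("Given" steps) of one scenario,
# as they might be collected from separate Feature Files.
SCENARIOS = [
    {"username": "valid", "password": "valid", "account": "active"},
    {"username": "valid", "password": "invalid", "account": "active"},
    {"username": "invalid", "password": "valid", "account": "active"},
]


def find_gaps(conditions, scenarios):
    """Return every combination of condition values that no scenario covers."""
    names = sorted(conditions)
    covered = {tuple(s[name] for name in names) for s in scenarios}
    all_combinations = product(*(conditions[name] for name in names))
    return [
        dict(zip(names, combo))
        for combo in all_combinations
        if combo not in covered
    ]


gaps = find_gaps(CONDITIONS, SCENARIOS)
print(f"{len(gaps)} uncovered combinations, for example: {gaps[0]}")
```

Even in this toy example, none of the three scenarios says what should happen when the account is locked. That kind of omission is easy to miss when the scenarios live in separate text files, and easy to surface once the logic is held in a structured form.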

The natural language used in Gherkin further increases the scope for ambiguity. Its lack of logical precision risks mistranslation as written language is converted into logically precise (and unforgiving) code. This increases the potential for miscommunication, bugs and time-consuming rework.

That’s not to say that Gherkin should not feature in Behaviour-Driven approaches. It can and, in many cases, should; however, it must be combined with a broader strategy for maintaining a logically accurate, up-to-date and complete understanding of the desired system behaviour.

This understanding must moreover be one that can be used directly in testing and development, without the risk of misunderstanding or mistranslation. For this, the Feature Files must feed a logically precise picture of the desired system behaviour, complete with dependency mapping between components. This enables accurate impact analysis whenever a new change request arrives.
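As a rough illustration of what dependency mapping makes possible, the following Python sketch walks a small dependency map to list everything that might be affected by a change. The component names, the `DEPENDENTS` map and the `impacted_by` function are assumptions invented for this example, not part of any specific tool.

```python
from collections import deque

# Hypothetical dependency map: each key is a component or scenario, and its
# list names the items that depend on it directly.
DEPENDENTS = {
    "login": ["view_basket", "checkout"],
    "view_basket": ["checkout"],
    "checkout": ["order_confirmation"],
    "order_confirmation": [],
    "search": ["view_basket"],
}


def impacted_by(changed, dependents):
    """Breadth-first walk returning everything downstream of `changed`."""
    seen = set()
    queue = deque([changed])
    while queue:
        current = queue.popleft()
        for item in dependents.get(current, []):
            if item not in seen:
                seen.add(item)
                queue.append(item)
    return sorted(seen)


# A change request arrives for the "search" component.
print(impacted_by("search", DEPENDENTS))
```

With the relationships recorded explicitly, a change request for one component yields a list of the scenarios and tests that should be reviewed, rather than relying on someone remembering how the Feature Files relate.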

In addition to maintaining an up-to-date, shared understanding of the desired system behaviour, BDD must be capable of translating this desired behaviour rapidly into code. The updated system must be released quickly enough to keep pace with fast-changing user needs.

Today, this demands rapid iterative delivery, developing and testing in parallel from Behaviour-Driven specifications. QA must be capable of building up-to-date tests to ensure the quality of each release, often building test suites while or before code is being developed.

However, rigorously testing fast-changing systems in short iterations is frequently made impossible by time-consuming dependencies and cumbersome manual processes. These swallow time and undermine test coverage, forcing test execution behind releases. This in turn reduces the likelihood that production systems will accurately reflect the desired system behaviour.

I’ve written a fair amount previously on some of these challenges, each of which could constitute its own article. For now, I’ve summarised five of the most common QA barriers to BDD, linking to additional resources on each. Each of these bottlenecks is capable of failing the second criterion of BDD set out above:

Today, regression suites can contain thousands of tests, making it impossible to manually update all existing artefacts after every change. In turn, test teams are forced to focus either on testing newly introduced logic, or running the regression needed to identify integration errors and unexpected impact of system change. Either way, system logic goes untested before a release, exposing systems to damaging bugs.

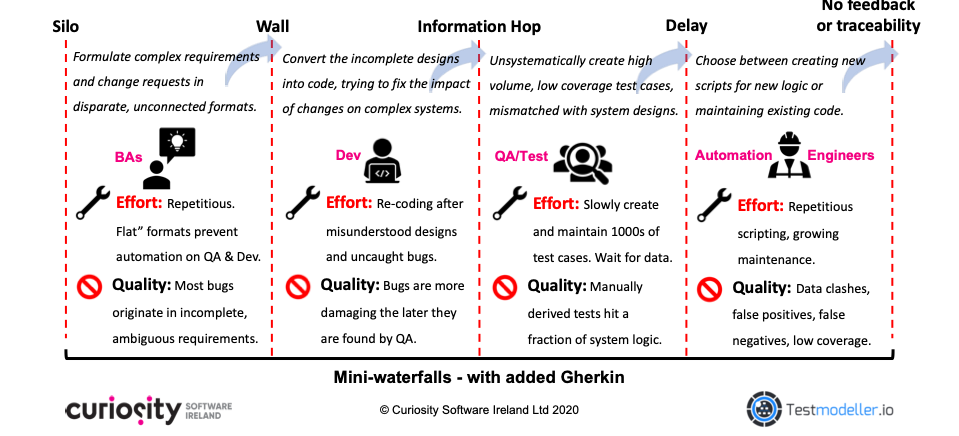

Again, the format of system requirements are frequently a root cause of these delays. The lack of logical precision and ‘flat’ format of text-heavy Gherkin Feature Files prevents automated or systematic test design. Meanwhile, a lack of dependency mapping between Gherkin and a lack of traceability to test artefacts prevents automated maintenance of test assets after a system changes.

QA teams instead manually translate the Gherkin into test artefacts, often waiting for code to be developed to get a better understanding of the system under test. This prevents parallelisation of testing and development, pushing testing ever-later. These ‘mini-Waterfalls’ – in which systems are designed, then developed, and only then tested – prevents the rapid, iterative delivery necessary for effective BDD:

Figure 2 – Mini-waterfalls prevent accurate, in-sprint development and testing of fast-changing systems.

A requirements-driven approach to test design would instead be a truly behaviour-driven approach, by virtue of the requirements themselves being Behaviour-Driven. If this same approach to test design and maintenance is automated, it can furthermore remove the bottlenecks set out in this section. Rigorous QA can then occur in-sprint, detecting damaging defects before each release. Such an approach will be set out in Part Two.
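As a simplified illustration of automated, requirements-driven test design, the Python sketch below derives one test case per path through a small flowchart. The flowchart, its node names and the `generate_paths` function are hypothetical, and the sketch assumes the model contains no loops; real models and tools are considerably richer.

```python
# Hypothetical flowchart of a login requirement: each node maps to the
# nodes that can follow it. Node names are illustrative only.
FLOWCHART = {
    "start": ["enter_credentials"],
    "enter_credentials": ["credentials_valid", "credentials_invalid"],
    "credentials_valid": ["account_active", "account_locked"],
    "credentials_invalid": ["show_error"],
    "account_active": ["show_dashboard"],
    "account_locked": ["show_locked_message"],
    "show_error": [],
    "show_dashboard": [],
    "show_locked_message": [],
}


def generate_paths(flowchart, node="start", prefix=()):
    """Yield every path from `node` to an end node (assumes no cycles)."""
    path = prefix + (node,)
    next_nodes = flowchart[node]
    if not next_nodes:
        yield path
        return
    for next_node in next_nodes:
        yield from generate_paths(flowchart, next_node, path)


test_cases = list(generate_paths(FLOWCHART))
for number, path in enumerate(test_cases, start=1):
    print(f"Test {number}: " + " -> ".join(path))
```

Because the tests are derived from the model rather than written by hand, changing the flowchart and regenerating the paths updates the test suite in the same step. That is the kind of maintenance which becomes impractical when thousands of tests must be edited manually.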

To conclude Part One of this article, let’s consider an unfortunately common alternative to tackling the challenges to in-sprint testing. This alternative allows testing to roll continuously over to the next sprint, growing further behind development. Sometimes, ‘newly’ released functionality might be tested sprints (and months) after its release.

As emphasised above, this approach is not compatible with truly Behaviour-Driven Development. Releasing untested functionality to production leaves fast-changing systems exposed to damaging bugs, failing the second criterion defined above. These bugs not only cost up to 10 times more to fix than those found during QA,[iv] but they are almost certainly not compatible with the desired user behaviour.

So, what does a truly behaviour-driven approach to software delivery look like? Part two of this article will set out a model-based approach to capturing fast-changing user requirements accurately and comprehensively, before using those same models to drive accurate development and rigorous in-sprint testing.

Can’t wait until part 2? Then watch James Walker’s talk in the DevOps Bunker: Using Visual models to Unlock BDD. The live demo and discussion will provide an overview of model-based BDD, setting out how it solves the challenges discussed in this article.

[i] Dan North (2006), Introducing BDD, retrieved from https://dannorth.net/introducing-bdd/ on August 5th 2020.

[ii] Of course, not everyone needs to be pulling in one, homogeneous direction. There will be exploration and experimentation, as well as divergent understandings to be explored. Yet, all of these understandings should be valid and backed by a chance of successfully implementing the desired user behaviour; working from a misunderstanding does not achieve this.

[iii] Sogeti, Capgemini (2019), World Quality Report 2019-20, retrieved from https://www.capgemini.com/gb-en/research/world-quality-report-2019/ on August 5th 2020.

[iv] National Institute of Standards and Technology, cited in Deep Source (2019), Exponential Cost of Fixing Bugs, retrieved from https://deepsource.io/blog/exponential-cost-of-fixing-bugs/ on August 5th 2020.
