Models in testing: Where’s their value?

System models: there’s lots of different techniques today, but where is their true value for testers and developers? Here’s five ways that I’ve found...


13 min read

Thomas Pryce

03 December 2019 14:12:46 GMT


UI Testing is often considered the most intuitive type of testing for human testers. UIs are built for human use, so testers can simply act as a user would. Otherwise, they can code an automation framework to enter a few data values. A couple of clicks later, and the UI is tested! Simple, right?

This attitude underestimates the number of factors that must be validated before a UI can be considered ‘tested’. This article focuses on these factors. It considers the bottlenecks of a siloed approach that validates each factor separately. It then calls for a unified approach that can improve both the efficiency and rigour of UI testing.

The article then sets out a practical guide for creating such a ‘one-stop-shop’ in UI Testing. This shift-left approach maintains several types of UI Test from central flowchart models. It makes rigorous, multi-pronged UI testing possible, even within short iterations.

Testing a UI completely requires a diversity of testing activities. They include:

Functional testing. UI tests must check that the logic of a UI works as intended. Functional tests make sure that a UI produces the right response to certain actions. The tests compare how a UI responds to inputted data against a set of pre-defined expected results. This must extend beyond the UI itself, testing the APIs and databases that underpin a screen.

Usability. Even with impeccable functional logic, a UI is only valuable if users can easily access it. UI testing must make sure that a UI has an intuitive design, and that the code reflects its design. Usability testing must furthermore test against a representative range of users. That includes those with accessibility requirements like impaired vision.

Performance. A UI must also perform efficiently in all production-like conditions. UI testing must test a UI against the full range of possible production workloads.

Usability and performance testing are often performed as an afterthought to functional testing. Yet ‘non-functional’ bugs are often the most high-impact. Slow load times affect every user on a site, and the majority of mobile visits are abandoned if a page takes longer than three seconds to load.[1] Usability bugs likewise drive users away, for instance if they cannot find the button to progress to the next screen.

This list covers only a fraction of the testing types that make up complete UI testing. Testers must also perform each against a range of browsers, devices and applications. UI testing is not so “simple” after all.

The various types of UI testing are often performed in isolation. This creates several silos, each using a range of point solutions. Manual testers often test usability, working either with a finished UI or wireframes. Functional testing might also be manual, or scripted frameworks might be used. Performance testing then requires another set of scripts, relying on yet another framework.

These silos create significant duplicate effort that prevents complete testing within short iterations. Each contains a range of manual labours, many of which are the same across silos. Every time a UI changes, each silo then repeats these efforts.

A better approach creates several types of UI test from one central artefact. Maintaining this ‘one-stop-shop’ in turn keeps a range of UI tests up-to-date. This enables rigorous UI testing, even when faced with fast-changing UIs.

A Model-Based approach for creating such a one-stop-shop is set out below. It focuses for brevity on functional testing, visual validation, and Load testing. The same approach is easily extendable, weaving in API tests, database tests, and more. The approach then leads to complete UI testing, even in short iterations.

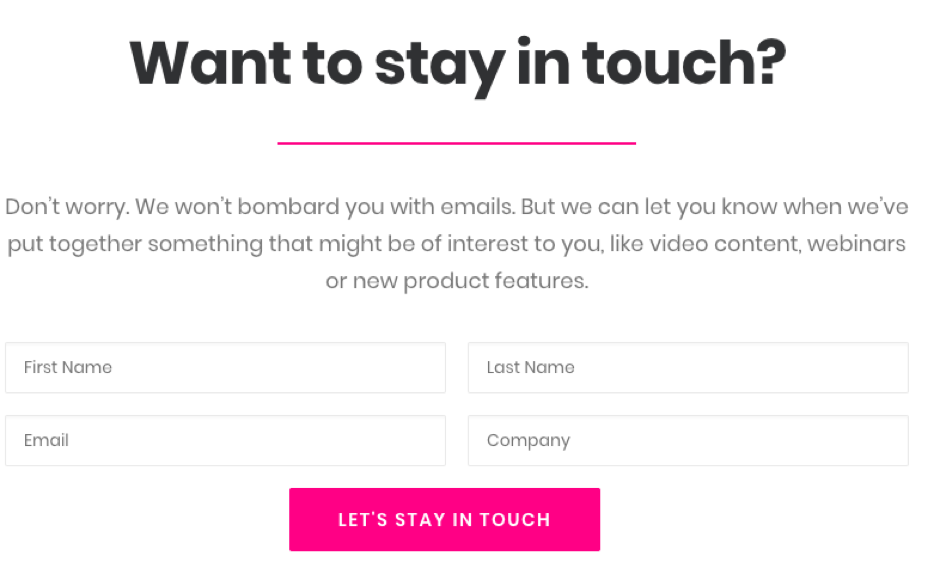

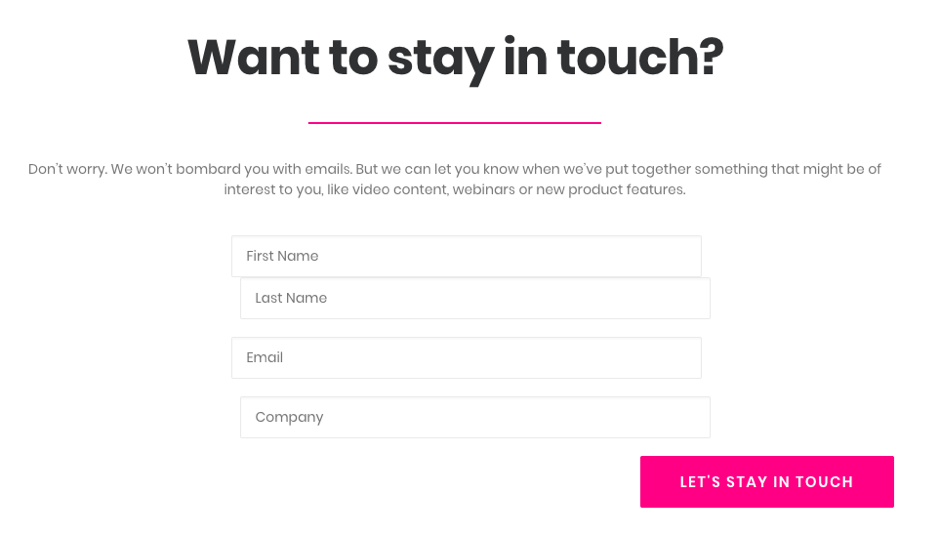

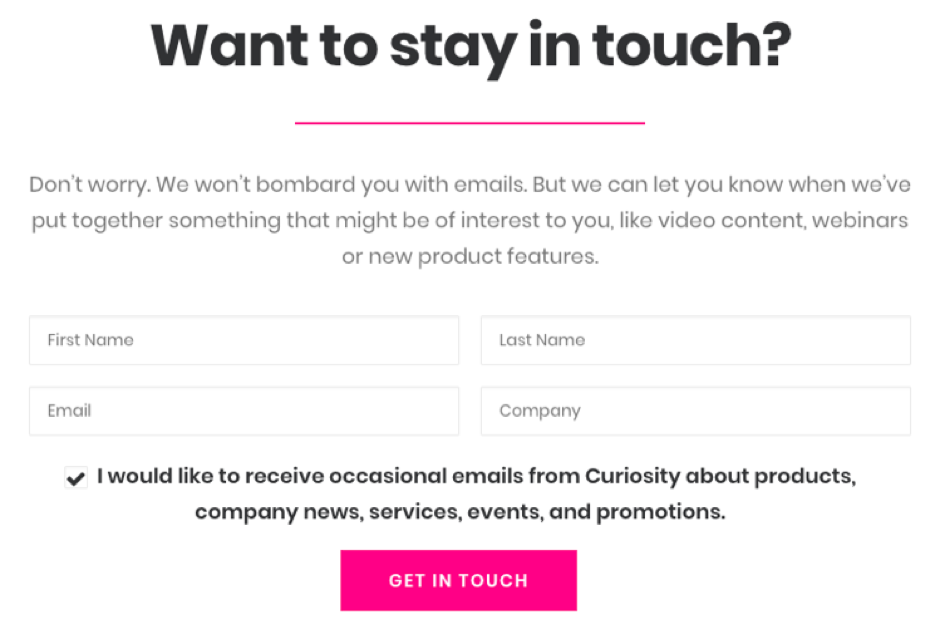

Modelling unifies UI testing and also improves the speed and rigour of each type of UI test. This article will use a simple example to show this, the ‘stay in touch’ form from the Curiosity website:

Figure 1 – A simple UI to test

Model-Based, functional testing is a great place to start when unifying UI Testing. It creates logical models that can then be used to generate several other types of UI Test.

Modelling also helps resolve many perennial challenges associated with functional test automation. This silo of UI testing often struggles with:

Manual test creation. Functional test automation usually relies on manual scripting. This is slow and complex, while UI Tests are repetitious by nature. They include many shared steps like clicking a “Submit” button. A skilled engineer might take 30 minutes to code one test. They then copy their boilerplate code over and over again. Faced with thousands of possible tests, this is too slow for short iterations.

Low test coverage. A simple set of UI screens can have thousands of possible paths through their logic. Each of these ‘paths’ might be a test case, and rigorous UI testing must test each distinct path. Manual test creation is unsystematic, leaving engineers little chance of achieving acceptable coverage. Tests leave unexpected scenarios and ‘negative paths’ particularly untested. This exposes UIs to costly bugs and time-consuming rework.

Invalid and incomplete test data. Every functional UI test requires matching test data for execution. This data must also be available on demand as otherwise bottlenecks arise. Nonetheless, testers still rely on large, low variety copies of production data. These are slow and complex to provision, and contain only a fraction of the data required. Out-of-date and invalid data furthermore destabilises functional tests, leading to automated test failures.

Test maintenance. Test maintenance is the greatest bottleneck in functional UI Testing. Scripted tests are extremely brittle to UI changes. They are hardcoded to identify elements using fixed identifiers, before exercising hardcoded actions. If an identifier changes, the script cannot run. If the logical journey through a UI changes, the test fails. Test engineers must then check and update EVERY test EVERY time a UI changes. Otherwise, invalid and out-of-date tests pile up.

Model-Based Testing removes these bottlenecks associated with the functional test automation silo. It furthermore improves the coverage of functional UI Testing. This enables rigorous and agile functional testing.

Test Modeller enables such a Model-Based approach, generating automated tests from logical models of a UI. This avoids slow and repetitious scripting, while also enhancing functional test coverage.

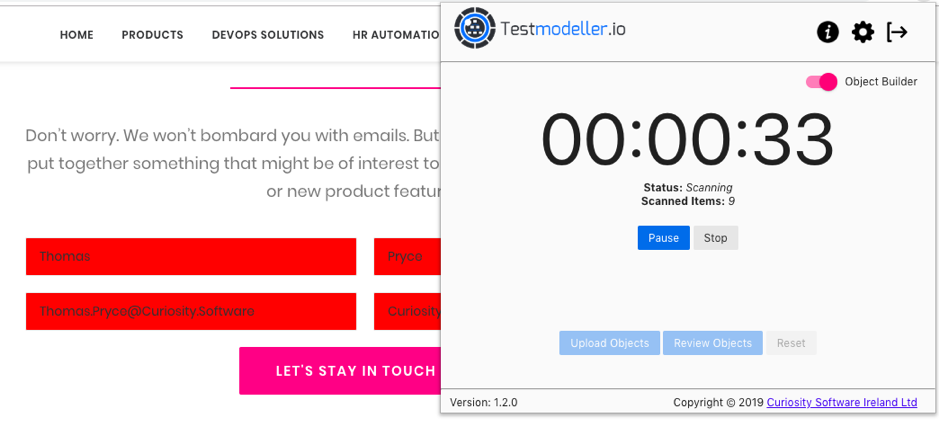

Creating models is quick, simple and automated. A browser extension converts UI scans into logical models. Testers simply select the elements to test:

Figure 2 – Using an application scanner to select elements from a web UI. Scanned elements are highlighted in red.

The application scanner captures every element attribute needed for automated UI testing. It detects element type, and every identifier that can locate the element. This includes link text, tags, IDs, class names and XPaths. Message traffic generated while scanning is also captured, along with test data variables.

Test Modeller automatically converts the element scans into executable automation modules. These contain a Page Object that includes the identifiers, data, logic and assertion. Test Modeller also creates the implementation to execute that Object.

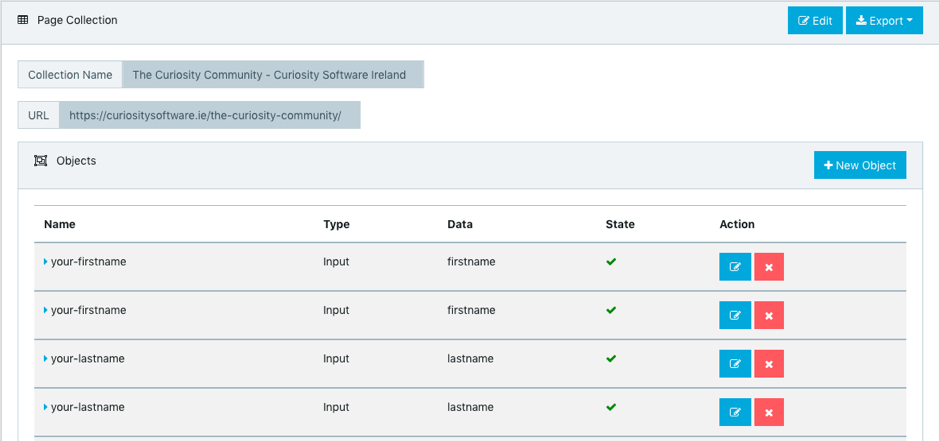

Figure 3 – An auto-generated page object in Test Modeller.
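To make the idea of a scanned page object more concrete, the following is a minimal sketch of the kind of structure such a scan might produce. It is not Test Modeller's generated code: the class names (`Locator`, `ScannedElement`, `StayInTouchPage`) and the locator values are hypothetical, chosen only to illustrate an element stored with several identifiers.

```python
from dataclasses import dataclass, field


@dataclass
class Locator:
    """One way of finding an element, for example by ID or XPath."""
    strategy: str  # e.g. "id", "class name", "xpath", "link text"
    value: str


@dataclass
class ScannedElement:
    """An element captured by a scan, with every locator that can find it."""
    name: str
    element_type: str
    locators: list[Locator] = field(default_factory=list)


@dataclass
class StayInTouchPage:
    """Hypothetical page object for the 'stay in touch' form."""
    elements: dict[str, ScannedElement] = field(default_factory=dict)

    def add(self, element: ScannedElement) -> None:
        self.elements[element.name] = element

    def locators_for(self, name: str) -> list[tuple[str, str]]:
        """Return (strategy, value) pairs in the order they were scanned."""
        return [(loc.strategy, loc.value) for loc in self.elements[name].locators]


# Assumed identifiers, for illustration only.
page = StayInTouchPage()
page.add(ScannedElement(
    name="first_name",
    element_type="input",
    locators=[
        Locator("id", "firstname"),
        Locator("xpath", "//form//input[@name='firstname']"),
    ],
))
page.add(ScannedElement(
    name="submit",
    element_type="button",
    locators=[Locator("xpath", "//form//button[@type='submit']")],
))
```

Keeping several locators per element is what later allows a test to fall back on another identifier when one changes.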

This approach already eliminates the challenge of manual scripting from functional test automation. Where it gets smart, however, is in the modelling.

Formally modelling the scanned elements drives up functional test coverage. The logical precision of the models enables the application of test generation algorithms. These algorithms create the smallest set of tests needed to “cover” all the logic in the models.

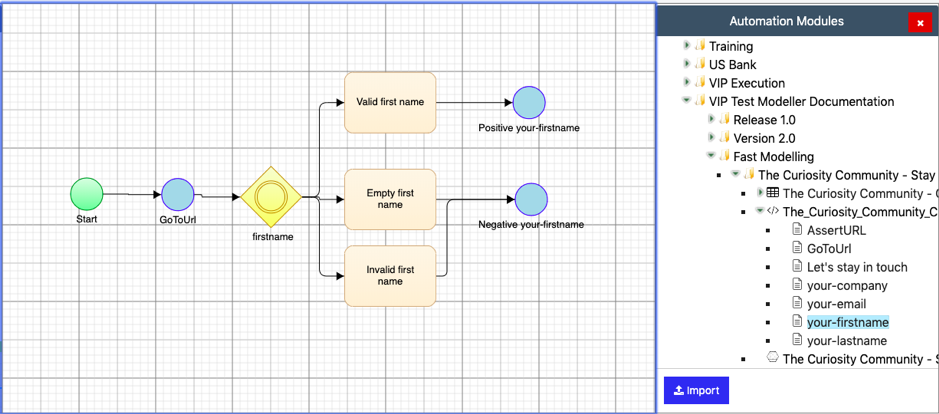

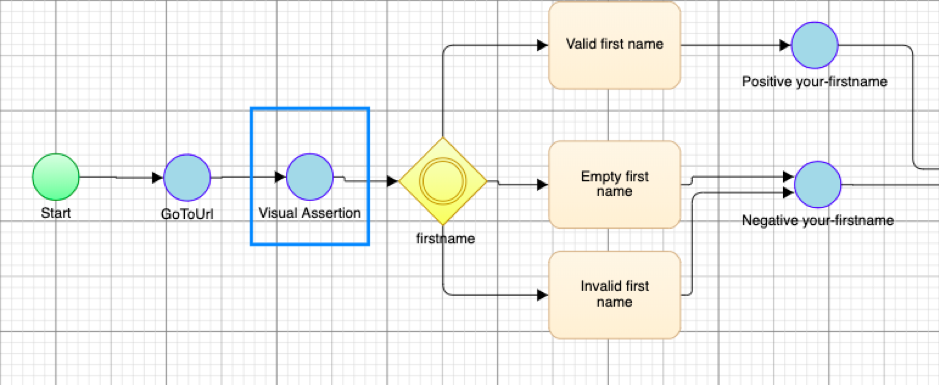

Building models with Test Modeller is again quick, simple and automated. A drag-and-drop approach arranges the automation modules created during UI scanning. These are then connected to form flowcharts:

Figure 4 – Importing test automation modules to flowchart models.

Intelligence accelerates the modelling process in Test Modeller. Automated modelling identifies test data and logic for elements as they are added.

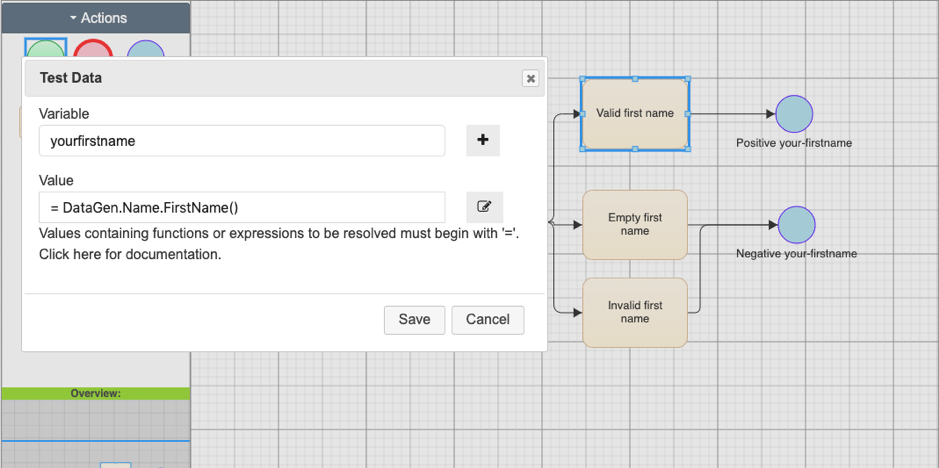

In Figure 4, importing the ‘first name’ input from the ‘stay in touch’ form has created a range of equivalence classes automatically. There is logic to test what happens when a user enters a valid first name and an invalid first name. The tests will also test the form’s validation when a user leaves the field empty.
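The three equivalence classes described above can be sketched in a few lines. This is an illustration only: the validation rule (letters, spaces, hyphens and apostrophes) and the sample values are assumptions, not the form's real validation logic.

```python
import re

# Assumed rule for illustration: a name starts with a letter and may then
# contain letters, spaces, hyphens and apostrophes.
NAME_PATTERN = re.compile(r"[A-Za-z][A-Za-z' -]*")


def classify_first_name(value: str) -> str:
    """Place an input value into one of three equivalence classes."""
    if value.strip() == "":
        return "empty"
    if NAME_PATTERN.fullmatch(value):
        return "valid"
    return "invalid"


# One representative value per class is enough to exercise each branch.
REPRESENTATIVES = {
    "valid": "Thomas",
    "invalid": "T0m@s",
    "empty": "",
}

for expected_class, sample in REPRESENTATIVES.items():
    assert classify_first_name(sample) == expected_class
```

Each class becomes a separate branch in the model, so a generated test suite exercises the positive path and both negative paths.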

Modelling a UI encourages a tester to consider both its positive and negative logic. Intelligent prompts in this approach help them to identify every journey a user can take. Generating UI tests from the models in turn resolves the challenge of functional test coverage.

Test Modeller furthermore identifies the data needed to test the UI logic. Every test generated from the model comes equipped with the test data needed to execute it. This up-to-date test data is found or made automatically during test creation.

Test data definition is another automated process, handled by intelligent modelling. Each logical step in the model comes tagged with test data as it is added to the model:

Figure 5 – Synthetic test data generation functions, specified automatically for the logical model.

Test Modeller comes equipped with over 500 combinable data generation functions. These are identified automatically as a UI is scanned. In the above example, a data function has been generated automatically to create a valid first name in the “first name” text box.

The dynamic functions resolve “just in time” as each test is generated from the model. This ensures that every test comes with up-to-date test data with which to execute it.

Each test furthermore has different data associated with it. This better reflects real-world user activity, while again increasing functional test coverage. It creates data to test the edge cases rarely found in production data.
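The idea of data functions that resolve “just in time” can be sketched as follows. This is a simplified illustration, not Test Modeller's data engine: the function names, the step names and the sample values are all hypothetical. Each model step is tagged with a function rather than a fixed value, and the function is only called when a test is generated.

```python
import random
import string

FIRST_NAMES = ["Ada", "Grace", "Alan", "Edsger"]  # illustrative values only


def valid_first_name(rng: random.Random) -> str:
    return rng.choice(FIRST_NAMES)


def invalid_first_name(rng: random.Random) -> str:
    # Digits and symbols only, so the value can never be a plausible name.
    return "".join(rng.choice(string.digits + "@#!") for _ in range(6))


def empty_value(rng: random.Random) -> str:
    return ""


# Each model step is tagged with a data function instead of a hardcoded value.
MODEL_STEPS = {
    "enter_valid_first_name": valid_first_name,
    "enter_invalid_first_name": invalid_first_name,
    "leave_first_name_empty": empty_value,
}


def generate_test(path: list[str], rng: random.Random) -> list[tuple[str, str]]:
    """Resolve each step's data function at generation time."""
    return [(step, MODEL_STEPS[step](rng)) for step in path]


rng = random.Random(42)  # seeded so that a generated suite is reproducible
test_a = generate_test(["enter_valid_first_name"], rng)
test_b = generate_test(["enter_invalid_first_name"], rng)
```

Because the functions run at generation time, regenerating the suite yields fresh values for every test rather than re-using a stale data set.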

Modelling thereby avoids the bottlenecks caused by data provisioning and automated test failures. It also improves the quality of functional test data.

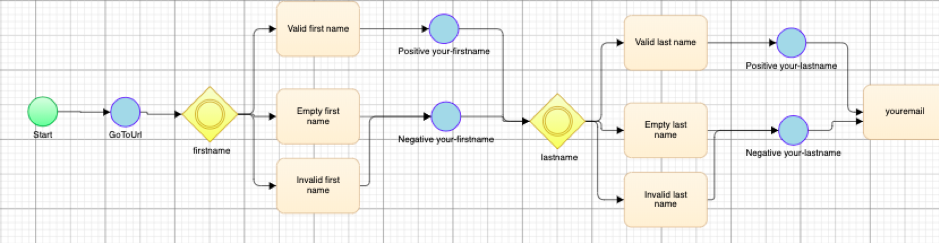

Completing the model of the “stay in touch” form is quick and easy using this drag-and-drop approach:

Figure 6 – Complete models of a UI, built rapidly from modelled scans.

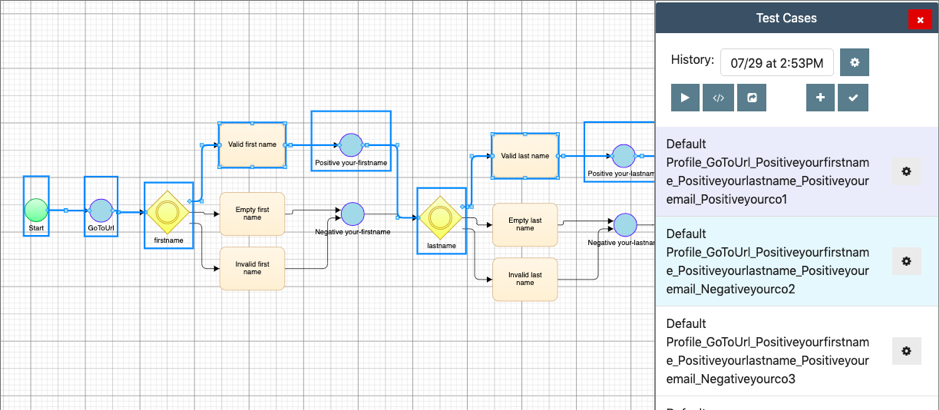

The mathematical precision of the flowcharts then enables automated test case generation. This is far quicker than creating tests by hand and also increases test coverage. Automated algorithms identify every ‘path’ through the logical model in seconds.

The test design algorithms work like a GPS identifying routes through a city map. Each path is the same as a test case:

Figure 7 – Automated test generation identifies “paths” through the logical UI models.

The algorithms generate the smallest set of paths needed for optimal coverage. That might mean testing every distinct journey through a UI’s logic. High-risk or critical functionality might also be focused on more exhaustively.
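The GPS analogy can be illustrated with a small sketch. The flowchart below is a hypothetical, simplified model of the form, and the selection step is a simple greedy heuristic for covering every edge; it is not Test Modeller's algorithm and does not always find the true minimum.

```python
# Hypothetical flowchart of the form: each key is a block, each value lists
# the blocks that can follow it.
FLOW = {
    "start": ["first_name_valid", "first_name_invalid"],
    "first_name_valid": ["email_field"],
    "first_name_invalid": ["email_field"],
    "email_field": ["email_valid", "email_invalid"],
    "email_valid": ["submit"],
    "email_invalid": ["submit"],
    "submit": ["end"],
    "end": [],
}


def all_paths(flow, node="start", end="end"):
    """Depth-first enumeration of every path through an acyclic flowchart."""
    if node == end:
        return [[node]]
    return [[node] + rest for nxt in flow[node] for rest in all_paths(flow, nxt, end)]


def edges_of(path):
    """The set of (from, to) connections that a path travels along."""
    return set(zip(path, path[1:]))


def covering_paths(paths):
    """Greedy selection: keep picking the path that covers the most untested edges."""
    uncovered = set().union(*(edges_of(p) for p in paths))
    chosen = []
    while uncovered:
        best = max(paths, key=lambda p: len(edges_of(p) & uncovered))
        chosen.append(best)
        uncovered -= edges_of(best)
    return chosen


paths = all_paths(FLOW)        # every distinct journey through the model
suite = covering_paths(paths)  # a reduced set that still touches every edge
```

In this small model, exhaustive generation yields four journeys, while two are enough to exercise every connection in the flowchart. Which of the two suites to run is the kind of coverage choice described above.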

Testing in this approach detects UI bugs earlier, where they need less time and cost to fix. Test optimisation avoids wastefully over-testing the same logic, while retaining testing rigour.

The play button in the above screenshot compiles the test cases and data. This creates automated test scripts in one click. The scripts use the identifiers and assertions scanned earlier. This eradicates the need for slow and repetitious scripting.

Though simple, the script generation is capable of generating tests for complex systems. The Test Modeller supports C#, Python and Java generation. Code templates can also generate scripts that follow custom code architectures.

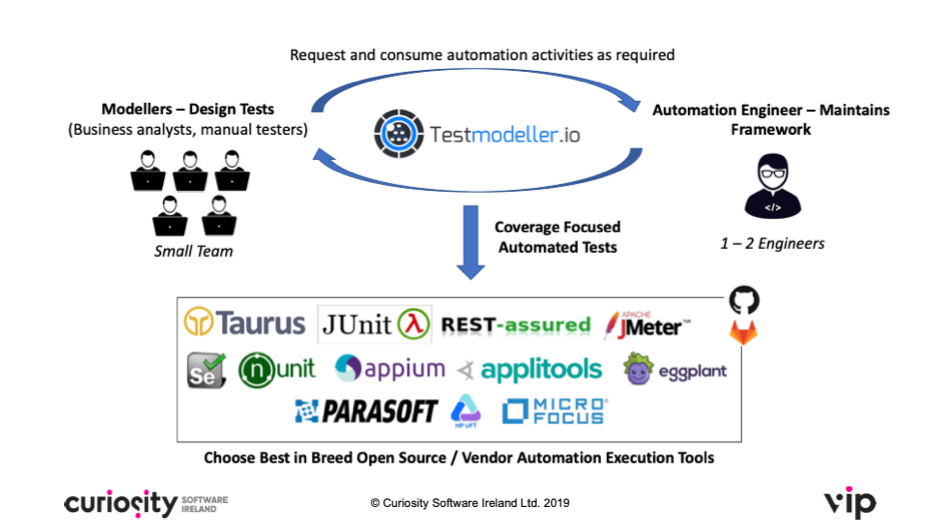

This enables code generation for homegrown, commercial and open source frameworks. The Test Modeller furthermore parses code from existing frameworks. Their actions, objects and assertions can then be re-used when defining new tests.

Testers without coding backgrounds can then collaborate with automation engineers. The engineers’ scripts become re-usable in the “low code”, script-less approach:

Figure 8 – A small core of skilled engineers create custom code. This is re-used by testers enterprise-wide.

This approach combines the simplicity of “low code” with the flexibility of scripting. It generates test scripts and data from application scans. Where UI logic requires new automation, automation engineers can feed in re-usable code.

Model-Based Testing can thereby support enterprise-wide functional test automation. It also avoids the challenges associated with functional UI Testing. This includes unnecessary scripting, poor test coverage, and test data bottlenecks. Test maintenance will be discussed after seeing how a range of UI tests can be created from the same model.

Even in this approach, the scripted framework cannot yet assure UI quality. The tests remain incapable of testing certain factors. They cannot detect, for instance, visual errors that disrupt a user’s experience.

Scripted functional frameworks act like computer processes, not human users. Even AI-driven frameworks are built with code, and work with code. They identify elements via XPaths, for instance, and execute coded actions against them.

Human users, by contrast, rarely see these coded identifiers. They work with the visual representations built on the code. This is why manual testers are often used for non-functional testing.

Standard scripted frameworks are thus blind to visual bugs. They cannot detect the misrepresentations of a UI’s code that so often frustrate users.

Imagine, for instance, that the submit button of the “stay in touch” form has become misaligned. The padding of the input boxes has also broken:

Figure 9 – Visual defects in the Stay In Touch form.

Both are bugs that might deter a user from completing the web form. Yet traditional automation frameworks will not detect these high-impact bugs. The hardcoded tests will run without issue. They will still find the form’s input fields and submit button using the identifiers in the code.

Manual testers, by contrast, will notice the visual defects right away. But, creating a separate silo of manual testers is costly, and test execution slow. A more sustainable approach is to bridge the gap between automation and users. Automated tests must act more like human users: they need eyes.

Applitools Eyes uses a proprietary AI-powered Cognitive Visual Technology to do exactly this. This first-of-its-kind technology equips automated tests with vision. They then identify significant visual irregularities that cannot be explained by resolution or device.

The visual assertions can be embedded in a wide range of test automation frameworks. They are then performed each time regression testing runs. Any deviation in the visual appearance of a UI suggests a visual defect. Visual testing thereby becomes part of a standard regression pack.
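The principle of comparing a rendered page against a baseline can be shown with a deliberately naive sketch. This is a plain pixel-by-pixel comparison over tiny greyscale grids, not the AI-powered comparison that Applitools performs, and the function names and image data are invented for illustration.

```python
def diff_ratio(baseline, candidate):
    """Fraction of pixels that differ between two equally sized greyscale images."""
    if len(baseline) != len(candidate) or any(
        len(b_row) != len(c_row) for b_row, c_row in zip(baseline, candidate)
    ):
        raise ValueError("images must have the same dimensions")
    total = sum(len(row) for row in baseline)
    changed = sum(
        1
        for b_row, c_row in zip(baseline, candidate)
        for b, c in zip(b_row, c_row)
        if b != c
    )
    return changed / total


def visual_check(baseline, candidate, tolerance=0.0):
    """Pass only if the share of changed pixels is within the tolerance."""
    return diff_ratio(baseline, candidate) <= tolerance


# A 4x3 "screenshot" in which the bright pixels stand in for a button.
BASELINE = [
    [0, 0, 0, 0],
    [0, 255, 255, 0],
    [0, 0, 0, 0],
]
# The same screen with the button shifted one pixel to the right.
SHIFTED = [
    [0, 0, 0, 0],
    [0, 0, 255, 255],
    [0, 0, 0, 0],
]

unchanged_passes = visual_check(BASELINE, BASELINE)
shifted_passes = visual_check(BASELINE, SHIFTED)
```

A raw pixel comparison like this flags every difference, including harmless rendering variation between devices. That limitation is the reason for using a comparison that focuses on differences a user would actually perceive.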

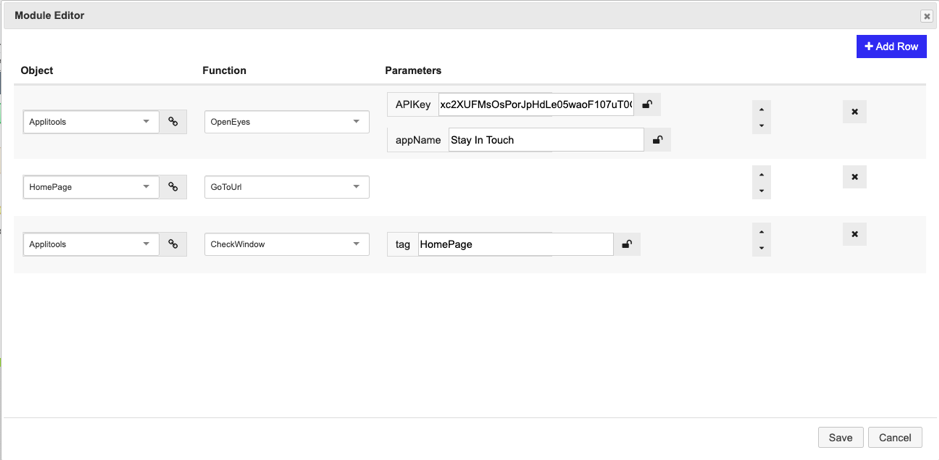

The assertions can be incorporated into models in Test Modeller. This is as easy as dragging and dropping a block to the flowchart:

Figure 10 – A visual assertion, added to the flowchart model of the “stay in touch” form.

A “low code” automation builder then defines the visual assertion:

Figure 11 – A visual assertion, defined using a “low code” automation builder.

In this example, a few user inputs are required to call Applitools Eyes, specifying the URL for the page under test. A “Check” is then applied. This compares the homepage to baseline images, performing an automated visual comparison.

No coding is required to add these visual assertions to the model. They are defined in existing automation code, which is then parsed by Test Modeller. Testers re-use the assertions, incorporating them into logical models.

Visual assertions can be performed at any stage of a functional test. This maximises both observability and testing rigour. You might want to just test how a page renders when it is first opened. For higher risk UIs, you might want to perform visual checks between test steps. This makes sure that the page appears correctly at each stage of a user’s journey.

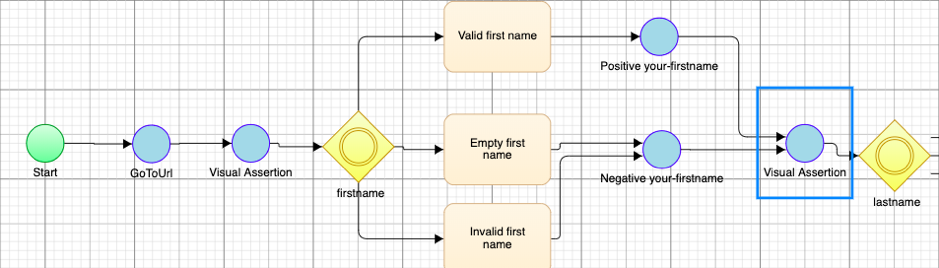

Defining mid-test assertions is just as quick and simple. Testers can rapidly copy and paste the automation block throughout their model of a given page:

Figure 12 – Model-Based Test Automation with Visual Assertions

In this example, a second visual check validates the appearance of the “stay in touch” form after a first name has been entered. This will check, for instance, the formatting of the input text.

Test Modeller automatically compiles the test code, complete with visual assertions. Executing tests is then as simple as re-generating the test cases and clicking the ‘Play’ button.

During execution, Applitools emulates the human eye, detecting any differences perceptible to users. These differences are presented in visual comparisons, providing clear test results. New baseline screenshots can also be selected at this point. This updates visual tests quickly after a UI has intentionally been changed.

Functional testing in this approach now also tests a UI for visual errors. The visual checks are furthermore introduced with minimal effort, and are maintained as part of the sprint cycle.

One Applitools capability, “Ultrafast Grid,” can expand the checks performed against the app in question to other operating systems, browsers, and viewports from within the Applitools service. The DOM snapshot from a given checkpoint is rerun on other browsers, operating systems and viewport sizes. Testers can therefore use the Ultrafast Grid to expand the breadth of UI testing, without having to rerun functional tests on multiple platforms.

At this point, minimal effort has combined automated functional testing with visual testing. This already removes the excess time and cost of manually performing visual checks. It has increased the speed and rigour of functional test automation.

This Model-Based Approach is easily extendable into additional types of UI Testing. This can be seen by adding automated Load testing to our example.

Generating Load tests from the flowchart applies the same approach as visual testing. It re-uses the logical model, defining automation to execute the Load tests. This again re-uses code from existing frameworks, requiring only a few user-defined parameters.

Subflows enable this re-usability of logical models. Each time a model is created for a UI in Test Modeller, it joins a central repository of models. These models can then be dragged-and-dropped to create new models.

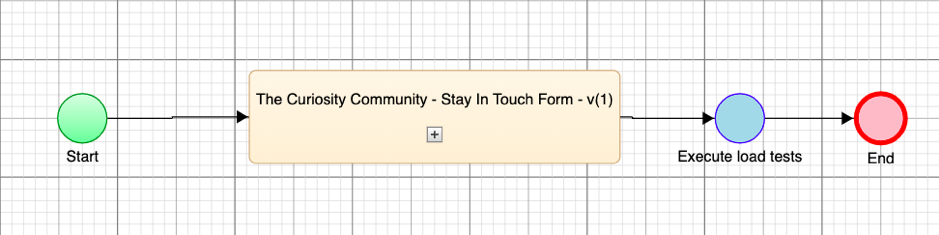

A logical model created for functional testing can then be re-used to generate Load tests:

Figure 13 – A logical model is re-used rapidly as a subflow.

In this example, an automation block has been created to execute the Load tests. This takes the data values specified in the subflow, feeding them into the generated Load tests.

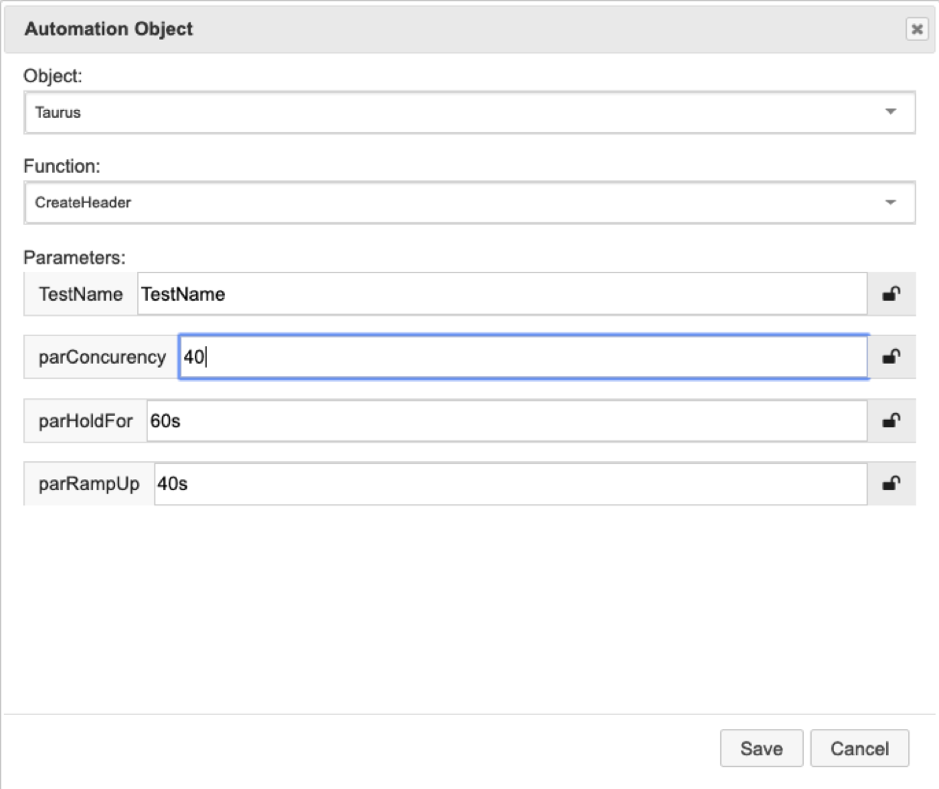

Test generation again requires just a few user inputted parameters. A “low code” builder defines the message data and then the Load test parameters:

Figure 14 – A “Low code” builder defines the concurrency, hold time and ramp up time for the Load tests.

The Load test scripts are then generated and executed at the click of a button. This again generates scripts for a wide range of Load testing frameworks. Script creation once more leverages code that has been parsed from existing frameworks. In the above example, tests are defined for Taurus.
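As a rough illustration of what the three Load test parameters control, the sketch below builds a Taurus-style configuration from them. It is not the output of Test Modeller. The URL, form fields and scenario name are hypothetical, and the configuration is emitted as JSON (which Taurus accepts alongside YAML) so that the example needs only the standard library.

```python
import json


def build_load_config(url, form_data, concurrency, ramp_up, hold_for):
    """Build a Taurus-style config dict from a few user-defined parameters."""
    scenario_name = "stay_in_touch_form"  # hypothetical scenario name
    return {
        "execution": [{
            "concurrency": concurrency,  # number of simulated concurrent users
            "ramp-up": ramp_up,          # time taken to reach full concurrency
            "hold-for": hold_for,        # time to hold at full concurrency
            "scenario": scenario_name,
        }],
        "scenarios": {
            scenario_name: {
                "requests": [{
                    "url": url,
                    "method": "POST",
                    "body": form_data,   # data values taken from the model
                }],
            },
        },
    }


config = build_load_config(
    url="https://example.com/stay-in-touch",  # placeholder URL
    form_data={"firstname": "Ada", "email": "ada@example.com"},
    concurrency=20,
    ramp_up="1m",
    hold_for="5m",
)
config_text = json.dumps(config, indent=2)
```

The resulting text could be saved to a file and passed to the Taurus command line tool. The point of the sketch is that the form data comes from the same model used for functional testing, while only the three load parameters are new.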

Logical models accordingly create a “one-stop-shop” for UI testing. In this example, they have removed the need to script both functional tests and Load tests. Modelling has also eradicated the delays created by manual visual checks.

The same approach also enhances the quality of UI testing. Optimised functional and Load tests cover the full range of logically distinct user journeys. They also come equipped with the test data needed to execute them. Visual assertions meanwhile check a UI’s appearance at every stage of a user journey.

In the siloed approach to UI testing, a change made to a UI can cause chaos and vast duplicate effort. Imagine that a new consent checkbox has been added to the “Stay in Touch” form. Imagine also that the Button element has been updated, including its name and element tag. Both are now “Get in Touch”, rather than “Stay in Touch”:

Figure 15 – Changes have been made in both the appearance and the logic of the UI

This will create significant manual effort for each testing silo in the traditional approach:

Applications today change fast, with code commits made monthly, weekly or daily. There is no time to repeat the effort of creating and executing tests. There is especially no time to repeat that effort in several testing silos.

Model-Based Testing automates this test maintenance. The models create a layer of abstraction above the test artefacts. The functional tests, Load tests and visual tests are all thus traceable to the models.

This layer of abstraction means that existing UI tests become throwaway assets. Logical changes made to a UI then only need to be reflected in the model, before re-generating an up-to-date set of tests in minutes.

If a new element is added to a UI, testers can re-scan it rapidly and import it to their models. If an identifier changes, the maintenance of automated tests is again automated. Scanning elements with Test Modeller detects several locators for each element. When one identifier changes, automated tests can then fall back on another locator. The intelligent automated test scripts additionally “self-heal”, detecting and updating invalid locators.
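The fallback behaviour can be sketched in a few lines. This is a simplified illustration of the general technique, not Test Modeller's self-healing implementation: the page is simulated with a dictionary, and the locator values are assumptions based on the renamed button in the example above.

```python
def find_element(dom, locators):
    """Try each (strategy, value) locator in turn and return the first match."""
    for strategy, value in locators:
        element = dom.get((strategy, value))
        if element is not None:
            return element, (strategy, value)
    raise LookupError("no locator matched the element")


def heal(locators, working):
    """Move the locator that worked to the front so later runs try it first."""
    return [working] + [loc for loc in locators if loc != working]


# Simulated page after the change: the button's name is now "Get in Touch",
# so only the structural XPath still matches.
DOM_AFTER_CHANGE = {
    ("xpath", "//form//button[@type='submit']"): "<button>Get in Touch</button>",
}

SUBMIT_LOCATORS = [
    ("name", "Stay in Touch"),                    # broken by the rename
    ("xpath", "//form//button[@type='submit']"),  # still valid
]

element, used = find_element(DOM_AFTER_CHANGE, SUBMIT_LOCATORS)
healed_locators = heal(SUBMIT_LOCATORS, used)
```

The test still finds the button through the second locator. Reordering the list is the simplest possible form of “healing”; a fuller implementation would also re-scan the element to replace the stale identifier.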

A simple example has demonstrated the power of Model-Based Techniques for UI Testing. Models create a one-stop-shop for testing UIs, avoiding the need to test UIs in silos.

A complete range of UI tests is instead generated from the same central models. The optimised tests and data furthermore update with the central models. The result is rigorous UI testing that keeps pace with tight release cycles.

See this approach in practice:

[1] https://www.thinkwithgoogle.com/marketing-resources/data-measurement/mobile-page-speed-new-industry-benchmarks/
